High-quality data drives decision-making and boosts productivity, but unfortunately it’s hard to come by. Anyone who works with data will be familiar with the struggles of poor data organisation, inconsistencies, inaccuracies, and redundancies. One or two inaccuracies are a simple fix, but when you’re overwhelmed by huge quantities of data, it can become a losing battle.

This is complicated further by the fact that data often needs to be synchronised across different sources and formats. Add to that the stress of maintaining security, and many of those tasked with data analytics are ready to throw in the towel. But hang in there — in this article, we’ll show you how to avoid the most common data quality problems so your business can start gaining accurate insights.

What causes data quality issues?

Before we jump to the solutions, let’s take a look at some of the most common causes of poor data quality:

- Human error: Whether data is entered by employees, customers, or survey respondents, human error is one of the most common causes of quality issues. Entry mistakes can result from carelessness, failure to follow guidelines, or a lack of clear guidelines to begin with. Where responsibility for data collection is undefined, organisations often also have to deal with duplicate data.

- Systems issues: Collecting quality data is a challenge, but integrating it can be even more problematic, especially when multiple systems and SaaS platforms are involved. Most organisations need to compare information across different silos, meaning that data from CRM systems, billing platforms, and marketing software must be integrated, even when those systems weren’t designed to work together. Similarly, any time a new system is adopted, legacy data must be migrated, a process that often results in losses and inaccuracies. Application and platform updates complicate this further.

- Data degradation: Over time, data typically becomes outdated and therefore inaccurate. Figures and survey results are constantly shifting, while personal details such as names, addresses, and contact information often change. Preventing this obsolete data from accumulating in databases requires an investment of time and resources that many organisations overlook.
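Duplicate and outdated records are among the easier problems to flag automatically. The Python sketch below is a minimal illustration under stated assumptions, not a prescribed method: it assumes each record is a dictionary with an `email` field that identifies a person and a `last_updated` date, and it treats anything not updated within 365 days (an arbitrary, hypothetical threshold) as potentially obsolete.

```python
from datetime import date, timedelta


def normalise_key(value: object) -> str:
    """Trim and lower-case a value so near-identical entries compare equal.

    Lower-casing whole email addresses is a simplifying assumption.
    """
    return str(value or "").strip().lower()


def find_duplicates(records: list[dict], key: str = "email") -> list[list[dict]]:
    """Group records sharing the same normalised key; return groups of two or more."""
    groups: dict[str, list[dict]] = {}
    for record in records:
        k = normalise_key(record.get(key))
        if not k:  # blank keys can't identify anyone, so skip them
            continue
        groups.setdefault(k, []).append(record)
    return [group for group in groups.values() if len(group) > 1]


def find_stale(records: list[dict], today: date, max_age_days: int = 365) -> list[dict]:
    """Return records whose hypothetical `last_updated` date is older than the cutoff."""
    cutoff = today - timedelta(days=max_age_days)
    return [r for r in records if r["last_updated"] < cutoff]


if __name__ == "__main__":
    customers = [
        {"email": "Jo@Example.com", "last_updated": date(2024, 1, 10)},
        {"email": " jo@example.com", "last_updated": date(2022, 3, 1)},
        {"email": "sam@example.com", "last_updated": date(2024, 5, 2)},
    ]
    print(len(find_duplicates(customers)))               # number of duplicate groups
    print(find_stale(customers, today=date(2024, 6, 1)))  # records older than the cutoff
```

In practice you might route flagged records to a person for review rather than deleting them outright, since deciding which of two duplicates is correct often needs human judgement.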

What impact does low-quality data have?

Business data is far more than a record to refer back to: it can be transformed into reports that give insights into everything from costs to performance. These insights can be used to address inefficiencies and generate forecasts that inform major business decisions.

So, what are the business costs or risks of poor data quality? In short, without accurate insights to guide them, business leaders lose the ability to make informed, data-based decisions, and strategy and bottom lines suffer as a result.

With so many factors involved in data collection and analysis, it is inevitable that errors will occur. When erroneous data is used to inform big decisions on budgets and contracts, or when it masks problems that could otherwise have been mitigated, the consequences can be disastrous.

Data-driven reports and dashboards, like those delivered in Power BI, are also used to identify inefficiencies, which in turn enables cost reductions and productivity gains. If these reports are inaccurate or unavailable, organisations not only miss out on improving their operations but also waste valuable time chasing and correcting the data. Where an expensive consultant is handling these issues, the costs can be crippling. Besides being inefficient, this break-fix approach of correcting errors after they surface often introduces further human error and rarely solves the root cause of the problem.

Beyond lost time and money, poor data quality can lead to regulatory issues. Organisations are often bound by industry standards and legal obligations, many of which call for complex reporting. Low-quality data can produce noncompliant reports, with consequences ranging from fines and loss of funding to withdrawal of business licences, tarnished reputations, and even criminal charges.

How to improve data quality

At this point, you’re probably wondering how to mitigate data quality issues. There are three core elements to address:

1. People

To reduce human error in data collection and entry, clearly define your employees’ responsibilities. This creates accountability, traceability, and competency, while helping to avoid duplicate data.

Roles should include:

- The data owner, who is accountable for the finished dataset, including its collection, quality, security, and uses. They define requirements, ensure data integrity, and grant access to the data under their remit.

- The data steward, who oversees data governance. This includes referencing and aggregating data, ensuring data is fit for purpose, reviewing data quality, and coordinating data delivery.

- The data engineer, who handles technical tasks including developing systems to meet the requirements of the data owner, implementing access controls, storing data, and managing the technological infrastructure.

All employees who have access to the data should undergo regular training so they understand these roles and processes. Since cross-silo insights demand cooperation and collaboration between teams, interdepartmental goal setting and meetings should be encouraged. If you need help with this process, Dear Watson Consulting (DWC) provides Power BI training for employees and data teams.

2. Processes

Defining clear guidelines and standards for data management will help you establish and maintain data quality. Adopt best practices and develop operational processes that follow the “data quality cycle”. This iterative procedure, which is similar to the methodology DWC uses, begins with defining your goals, including which metrics are required and which fields should be mandatory. You then define rules for measuring, monitoring, and analysing your data.
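The first steps of that cycle, deciding which fields are mandatory and defining a rule to measure them, can be sketched in a few lines of Python. This is a minimal illustration, not DWC’s methodology: the `QualityGoal` structure, the field names, and the 95% completeness target are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualityGoal:
    """A hypothetical quality goal: mandatory fields plus a completeness target."""
    mandatory_fields: tuple[str, ...]
    min_completeness: float  # share of records (0.0 to 1.0) that must be filled in


def is_filled(value: object) -> bool:
    """Treat None and blank strings as missing; anything else (including 0) counts."""
    return value is not None and str(value).strip() != ""


def completeness(records: list[dict], field: str) -> float:
    """Share of records where `field` is present and not blank."""
    if not records:
        return 0.0
    return sum(is_filled(r.get(field)) for r in records) / len(records)


def measure(records: list[dict], goal: QualityGoal) -> dict[str, tuple[float, bool]]:
    """Score each mandatory field and flag whether it meets the target."""
    results = {}
    for field in goal.mandatory_fields:
        score = completeness(records, field)
        results[field] = (score, score >= goal.min_completeness)
    return results


if __name__ == "__main__":
    goal = QualityGoal(mandatory_fields=("customer_id", "email"), min_completeness=0.95)
    records = [
        {"customer_id": "C001", "email": "a@example.com"},
        {"customer_id": "C002", "email": ""},
        {"customer_id": "C003", "email": "c@example.com"},
        {"customer_id": "C004"},
    ]
    for field, (score, ok) in measure(records, goal).items():
        print(f"{field}: {score:.0%} complete ({'meets' if ok else 'below'} target)")
```

Running the same measurement on each new batch is one way to turn it into the monitoring step: the targets stay fixed, and a field that drops below them becomes a prompt to investigate.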

3. Technology

Get to know which technologies your business needs to gather high-quality data. This will depend on the intended use of the data: reporting, predictive analytics, performance management, dashboard development, and so on. In Excel and Power BI, Power Query can be used to clean data, while Power Pivot can be used to model and analyse it.

If you’re wondering how to detect data quality issues, you can build automated error checking and validation into the data pipeline. This means data is tested as it is processed, so quality is built in rather than checked after the fact. Once erroneous or invalid data has been identified, it can be filtered out, fixed, or logged, depending on how significant the error is; your error-handling process defines which response applies.
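A minimal Python sketch of that idea follows. The rule names, fields, and the mapping of each rule to one of the three responses (fix, log, or reject) are hypothetical, chosen only to illustrate the pattern; in a real pipeline they would come from your own error-handling process.

```python
import logging
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional

log = logging.getLogger("pipeline")


class Action(Enum):
    FIX = "fix"        # correct the value automatically and keep the record
    LOG = "log"        # keep the record but log a warning for review
    REJECT = "reject"  # filter the record out of the pipeline


@dataclass
class Rule:
    name: str
    check: Callable[[dict], bool]                 # True when the record passes
    action: Action
    fix: Optional[Callable[[dict], dict]] = None  # required when action is FIX

    def __post_init__(self) -> None:
        if self.action is Action.FIX and self.fix is None:
            raise ValueError(f"rule {self.name!r} needs a fix function")


def validate(records: list[dict], rules: list[Rule]) -> tuple[list[dict], list[dict]]:
    """Run every rule on every record; return (accepted, rejected)."""
    accepted, rejected = [], []
    for original in records:
        record = dict(original)  # work on a copy so the input is untouched
        keep = True
        for rule in rules:
            if rule.check(record):
                continue
            if rule.action is Action.FIX:
                record = rule.fix(record)
            elif rule.action is Action.LOG:
                log.warning("rule %s failed for %r", rule.name, record)
            else:  # Action.REJECT: stop checking and drop the record
                keep = False
                break
        (accepted if keep else rejected).append(record)
    return accepted, rejected


# Hypothetical rules for a table of customer transactions.
RULES = [
    Rule("email_has_at", lambda r: "@" in str(r.get("email") or ""), Action.REJECT),
    Rule(
        "country_code_upper",
        lambda r: str(r.get("country") or "") == str(r.get("country") or "").strip().upper(),
        Action.FIX,
        fix=lambda r: {**r, "country": str(r.get("country") or "").strip().upper()},
    ),
    Rule("amount_non_negative", lambda r: r.get("amount", 0) >= 0, Action.LOG),
]

if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    batch = [
        {"email": "a@example.com", "country": " gb ", "amount": 10},
        {"email": "not-an-email", "country": "FR", "amount": 5},
        {"email": "b@example.com", "country": "DE", "amount": -3},
    ]
    accepted, rejected = validate(batch, RULES)
    print(len(accepted), len(rejected))
```

Keeping the rules as data, separate from the loop that applies them, means new checks can be added or reclassified without changing the pipeline code itself.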

Data engineers can build customised data quality checks, along with code validation and logic tests, into your pipeline. Rule definitions and built-in corrections can streamline this process further, and data quality reports can help you evaluate and improve the process over time. If you require help with this process, you can enlist DWC’s expert Power BI consultants.
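To make data quality measurable over time, each run of the pipeline could produce a small report. The Python sketch below, with hypothetical rule names and fields, computes the pass rate for each rule in a batch and compares two batches, so you can see whether the changes you have made are working. It is an illustration of the idea under those assumptions, not a specific reporting tool.

```python
from collections import Counter
from typing import Callable

# Hypothetical named rules; each returns True when a record passes.
RULES: dict[str, Callable[[dict], bool]] = {
    "email_has_at": lambda r: "@" in str(r.get("email") or ""),
    "amount_non_negative": lambda r: (
        isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0
    ),
}


def quality_report(records: list[dict]) -> dict[str, float]:
    """Pass rate (0.0 to 1.0) for each rule across one batch of records."""
    if not records:
        # An empty batch has nothing to fail; report it as fully passing.
        return {name: 1.0 for name in RULES}
    failures: Counter = Counter()
    for record in records:
        for name, check in RULES.items():
            if not check(record):
                failures[name] += 1
    return {name: 1.0 - failures[name] / len(records) for name in RULES}


def compare(previous: dict[str, float], current: dict[str, float]) -> dict[str, float]:
    """Change in pass rate per rule between two runs (positive means improvement).

    Rules missing from the previous run are compared against 0.0.
    """
    return {name: current[name] - previous.get(name, 0.0) for name in current}


if __name__ == "__main__":
    last_week = quality_report([
        {"email": "a@example.com", "amount": 5},
        {"email": "missing-at-sign", "amount": -1},
    ])
    this_week = quality_report([
        {"email": "a@example.com", "amount": 5},
        {"email": "b@example.com", "amount": -1},
    ])
    print(this_week)                      # pass rate per rule
    print(compare(last_week, this_week))  # change since last week
```

A report like this could feed a Power BI dashboard or a simple log; the point is that the same rules are measured the same way on every run, so trends are comparable.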

Start gaining accurate business insights today

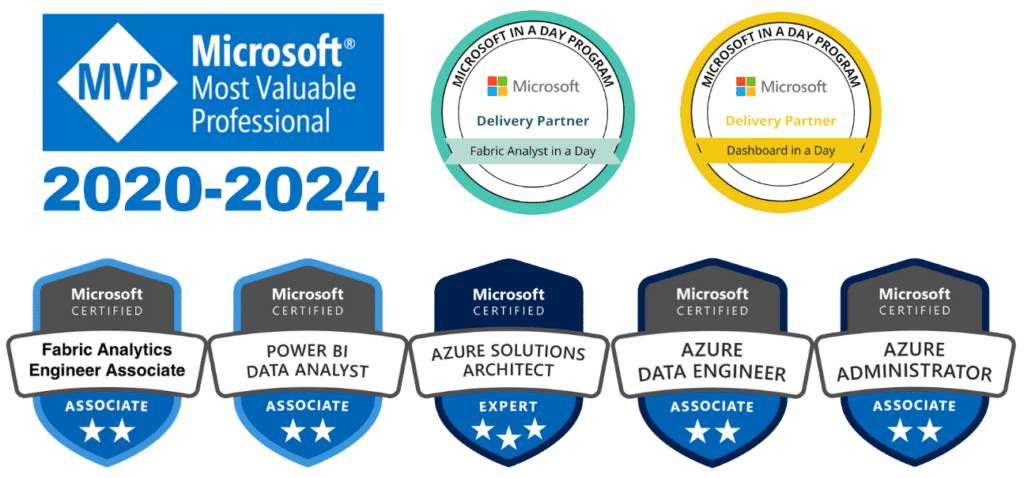

Now that you know how to resolve data quality issues, it’s time to put this knowledge into action. If you need assistance, Dear Watson Consulting is here to help. As Power BI specialists, we can develop your data pipeline and processes so you can start gaining accurate insights from your business data. Contact us today to find out more about our BI consulting and training services.